From Tools to Systems: The Real Shift in AI-Native GTM

Why the teams booking 400+ meetings per quarter aren’t working harder

Something happened to go-to-market over the past three years that nobody predicted. If you asked the average sales leader in 2023 what their stack would look like in 2026, they would have described an incremental upgrade on what they already had. Better CRM. Smarter email tools. Maybe some light automation. Nobody described what actually happened.

We didn’t get better tools. We got a new architecture for how revenue gets generated. And the teams that understood this early are now operating at a level that looks almost unfair from the outside. They’re booking meetings at 5-10x the rate of traditional teams, often with smaller headcount. The gap is widening every quarter.

The fundamental shift can be stated simply: we moved from running campaigns to designing systems. That’s the entire story. Everything else is detail.

Three eras in two paragraphs

The first era (roughly 2010-2018) was point solutions. A CRM. An email tool. A dialer. A data provider. Scale meant hiring more people, because you could only send as many emails as your SDRs could write and make as many calls as your team could dial. The second era (2018-2023) brought workflow automation. Zapier, Make, early sales engagement platforms. Teams that could design effective workflows gained real advantages, but those workflows were static. They followed predetermined paths and couldn’t adapt when conditions changed.

We’re now in the third era. The introduction of capable language models changed the game because suddenly we could build systems that reason, adapt, and improve. The limiting factor is no longer bandwidth or orchestration. It’s architecture. The teams winning aren’t just using AI tools. They’ve woven AI into every layer of the revenue engine, and the result is a fundamentally different kind of GTM motion.

The mental model that actually matters

I’ve watched teams implement identical toolsets with completely different outcomes. The gap isn’t technical competence. It’s how they think about the problem.

The old mental model asks: “How do I do this task faster?” That leads to decisions like “we need an email tool that lets us send more emails” and “we need a dialer that helps SDRs make more calls.” The focus is doing more of the same thing, faster. It’s a productivity frame.

The new mental model asks: “How do I design a system that achieves this outcome?” That leads to decisions like “we need a system that identifies the right accounts at the right time and engages them through the right channel with the right message.” The focus is on system outputs, not individual task speed. It’s an architecture frame.

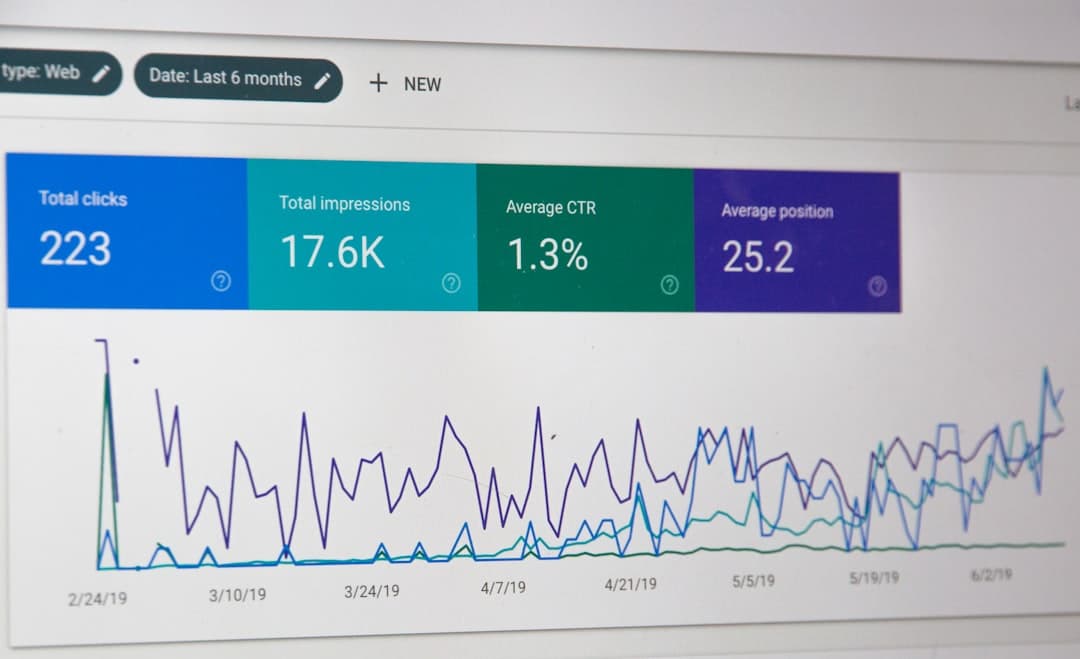

This distinction sounds academic until you see the numbers. Teams running the old model with good tools get 2-3% reply rates and 50-75 meetings per month per SDR. Teams running the new model, often with comparable tool spend, get 8-15% reply rates and 200-400 meetings per month with two or three people. The tooling cost in both scenarios is similar. The difference is the architecture sitting underneath.

The reason this isn’t obvious to most operators is that tool vendors have every incentive to sell productivity gains. “Send 10x more emails” is an easy pitch. “Redesign your entire GTM architecture around a unified data layer” is a hard one. So the conversation stays at the tool level, and teams keep swapping email platforms every six months wondering why their reply rates haven’t moved.

What the system actually looks like

When I work with teams on their GTM architecture, I think about it in five layers. The order matters, and you can’t fix a downstream layer if an upstream one is broken.

Data foundation. First-party data from your CRM, product, and website. Second-party data from partners, review sites, and social engagement. Third-party data from firmographic, technographic, and intent providers. The quality of this layer determines the ceiling for everything above it. No amount of AI sophistication compensates for bad data.

Intelligence layer. Enrichment, scoring, and routing. This is where AI has the most immediate impact. Research agents that build account profiles in minutes instead of the 30+ minutes an SDR would spend. Scoring models that evaluate fit, timing, and signal strength simultaneously. Routing logic that decides which accounts get high-touch treatment and which get automated sequences. The intelligence layer is where raw data becomes actionable knowledge.
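To make the scoring and routing idea concrete, here is a minimal sketch, not a production model. The weights, the 0.7 threshold, the `Account` fields, and the route names are all hypothetical values chosen for illustration. A real system would learn them from outcome data.

```python
from dataclasses import dataclass

# Hypothetical weights and threshold, chosen only for illustration.
WEIGHTS = {"fit": 0.4, "timing": 0.3, "signal": 0.3}
HIGH_TOUCH_THRESHOLD = 0.7


@dataclass
class Account:
    name: str
    fit: float     # 0-1: how closely the account matches the ideal customer profile
    timing: float  # 0-1: how favorable the timing looks right now
    signal: float  # 0-1: strength of observed intent signals


def score(account: Account) -> float:
    """Combine fit, timing, and signal strength into one weighted score."""
    return (
        WEIGHTS["fit"] * account.fit
        + WEIGHTS["timing"] * account.timing
        + WEIGHTS["signal"] * account.signal
    )


def route(account: Account) -> str:
    """Send high-scoring accounts to reps and the rest to automated sequences."""
    if score(account) >= HIGH_TOUCH_THRESHOLD:
        return "high_touch"
    return "automated_sequence"


accounts = [
    Account("Acme", fit=0.9, timing=0.8, signal=0.7),
    Account("Globex", fit=0.5, timing=0.2, signal=0.3),
]
for acct in accounts:
    print(acct.name, round(score(acct), 2), route(acct))
```

With these made-up inputs, Acme lands in the high-touch route and Globex in the automated sequence. The point is that all three dimensions are evaluated together in one pass rather than in separate tools.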

Execution layer. Email infrastructure with warmed domains, deliverability management, and sequencing. LinkedIn automation with safety limits and multi-account coordination. Phone systems with AI-prepared call context. Content delivery and proposal generation. This is the layer most teams overspend on, because execution tools are easy to buy. The problem is that execution quality is mostly determined by the layers above it, not by the tool itself.

Orchestration layer. This is the one most teams skip entirely, and it’s the one that separates the teams booking 100 meetings from the teams booking 400. Orchestration coordinates activity across channels and over time. It ensures prospects get a coherent multichannel experience rather than disconnected touches from different systems. When a prospect opens an email, the orchestration layer might trigger a LinkedIn connection request the next day and queue a phone call for day three. When a prospect visits the pricing page after receiving an email, the orchestration layer escalates them to the high-touch cadence. Without orchestration, you have multiple tools running in parallel with no coordination. With it, you have a revenue engine.
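The two triggers described above can be sketched as a small event handler. This is an illustrative sketch under stated assumptions: the `Prospect` fields, event names, and cadence labels are hypothetical, and “day three” is read here as three days after the email open.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class Prospect:
    email: str
    cadence: str = "automated"
    emailed: bool = False
    queue: list = field(default_factory=list)  # (due_date, channel, action) tuples


def handle_event(prospect: Prospect, event: str, today: date) -> None:
    """Apply cross-channel rules to a single engagement event."""
    if event == "email_sent":
        prospect.emailed = True
    elif event == "email_opened":
        # An open triggers LinkedIn the next day and a call on day three.
        prospect.queue.append((today + timedelta(days=1), "linkedin", "connection_request"))
        prospect.queue.append((today + timedelta(days=3), "phone", "call"))
    elif event == "pricing_page_visit" and prospect.emailed:
        # A pricing-page visit after an email escalates the prospect.
        prospect.cadence = "high_touch"


p = Prospect("jane@example.com")
start = date(2026, 1, 5)
handle_event(p, "email_sent", start)
handle_event(p, "email_opened", start)
handle_event(p, "pricing_page_visit", start + timedelta(days=1))

print(p.cadence)
for due, channel, action in sorted(p.queue):
    print(due.isoformat(), channel, action)
```

The design choice worth noticing is that every channel reads and writes the same prospect state. That shared state is what turns parallel tools into a coordinated sequence.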

Optimization layer. Performance measurement, attribution, A/B testing, feedback loops. The system learns and improves. Which signals predict closed-won deals? Which messages get responses? Which cadences produce meetings? The optimization layer answers these questions and feeds insights back into the intelligence and execution layers.
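One of those questions, which messages get responses, reduces to a small aggregation over an outcome log. The sketch below assumes a hypothetical log of `(message_variant, got_reply)` pairs with made-up data. A real feedback loop would also check that each variant has enough sends before acting on the result.

```python
from collections import defaultdict

# Hypothetical outcome log: (message_variant, got_reply)
outcomes = [
    ("variant_a", True), ("variant_a", False), ("variant_a", False), ("variant_a", False),
    ("variant_b", True), ("variant_b", True), ("variant_b", False), ("variant_b", False),
]


def reply_rates(log):
    """Return the reply rate for each message variant in the log."""
    sent = defaultdict(int)
    replied = defaultdict(int)
    for variant, got_reply in log:
        sent[variant] += 1
        replied[variant] += int(got_reply)
    return {variant: replied[variant] / sent[variant] for variant in sent}


rates = reply_rates(outcomes)
best_variant = max(rates, key=rates.get)
print(rates, best_variant)
```

Feeding `best_variant` back into the execution layer is the simplest form of the loop. The same pattern applies to signals and cadences.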

Why systems compound and tools don’t

Individual tools produce linear returns. A better email tool means slightly more meetings. A better dialer means slightly more conversations. The returns are real but they’re capped by the quality of everything around them.

Systems produce compounding returns. A well-designed system gets better over time as it accumulates data, refines its scoring models, and learns from outcomes. The team that built their system six months ago has a genuine advantage over the team starting today, and that gap widens daily. This is the part that makes systems thinking so important at a strategic level. Once a competitor builds a compounding revenue system and you haven’t, catching up becomes exponentially harder with every passing quarter.

There’s a secondary effect that’s harder to quantify but equally important. Systems can exhibit emergent behaviors that nobody explicitly programmed. A well-designed GTM system might discover that accounts in a certain industry respond better to LinkedIn before email, or that prospects who visit the pricing page twice within a week convert at 3x the rate of single visitors. These patterns emerge from the data as the system accumulates interactions. No human sat down and wrote a rule for it. The architecture created the conditions for the pattern to surface.

I keep thinking about a specific pattern I’ve seen at least a dozen times. A team starts with a modest data foundation and basic AI enrichment. After three months, their scoring model has enough signal history to meaningfully predict which accounts will convert. After six months, they’ve accumulated enough outcome data to optimize their entire cadence. After a year, their system is making routing and prioritization decisions that would take a human analyst weeks to arrive at manually. None of this was explicitly programmed. It emerged from the architecture. A tools-based team that started at the same time is still running the same static sequences they launched with, because tools don’t learn. They just execute.

The coordination problem nobody talks about

The biggest tax on most revenue teams is coordination overhead. They have good tools. They have capable people. But the energy required to move data between systems, trigger the right workflows, ensure everything stays synchronized, and maintain handoffs across functions eats up an enormous percentage of capacity.

Consider what happens when a qualified prospect visits your pricing page. In a tool-based world, that signal needs to be captured by your website visitor identification tool, matched to an account in your data platform, enriched through a third-party provider, scored (often in a spreadsheet or CRM formula), routed to the right rep via email or Slack, and then the rep needs to pull up the visitor’s history across multiple systems before crafting a response. Each handoff is a point of failure. Each one costs time. In many organizations, the total elapsed time from pricing page visit to personalized outreach is measured in days. I’ve seen orgs where it takes a full week. By that point, the prospect has already talked to two competitors.

In a systems-based world, this entire flow happens automatically within minutes. The signal is captured, the account is matched and enriched, the score is computed, the routing happens, and the outreach is triggered with full context. The rep’s involvement starts at the point where human judgment adds the most value, which is usually the conversation itself, not the preparation for it.
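As a rough sketch of that flow, the stages can be written as plain functions chained into one pipeline. Everything here is a stand-in: the lookup table, the enrichment values, the toy scoring rule, and the 0.7 routing threshold are assumptions, and a real system would call a CRM, an enrichment provider, and a scoring model at those points.

```python
from typing import Callable

Signal = dict  # a plain dict carried through each stage


def match_account(s: Signal) -> Signal:
    # Hypothetical lookup; a real system would query a CRM or data platform.
    domain_to_account = {"acme.com": "Acme Corp"}
    return {**s, "account": domain_to_account.get(s["domain"], "unknown")}


def enrich(s: Signal) -> Signal:
    # Stand-in for a third-party enrichment call.
    return {**s, "employees": 250, "industry": "logistics"}


def score(s: Signal) -> Signal:
    # Toy rule: pricing-page visits from known accounts score high.
    known = s["account"] != "unknown"
    return {**s, "score": 0.9 if known and s["page"] == "/pricing" else 0.3}


def route(s: Signal) -> Signal:
    # Hypothetical threshold for handing the account to a rep.
    return {**s, "owner": "rep" if s["score"] >= 0.7 else "automated_sequence"}


PIPELINE: list[Callable[[Signal], Signal]] = [match_account, enrich, score, route]


def process(signal: Signal) -> Signal:
    """Run one signal through every stage with no manual handoffs."""
    for stage in PIPELINE:
        signal = stage(signal)
    return signal


result = process({"domain": "acme.com", "page": "/pricing"})
print(result)
```

Each stage adds to the same record, so by the time the rep is involved the full context is already attached to the signal.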

This is why teams running systems don’t just perform marginally better. They operate in a different category. The coordination problem doesn’t just shrink. It mostly disappears.

Where context engineering fits

The evolution from prompt engineering to context engineering is one of the more consequential shifts I’ve tracked over the past two years, and it maps directly onto the tools-to-systems transition.

Prompt engineering treats each AI interaction as largely independent. You craft the perfect prompt for a specific task, get the output, and move on. It’s the AI equivalent of the tool mindset.

Context engineering treats AI interactions as part of a system. The prompt itself is a small fraction of the total context. The rest is conversation history, retrieved documents, tool outputs, system state, and dynamic data. The skill is understanding what information an AI system needs to perform well and designing the infrastructure that provides it reliably.

The practical implication: the teams that are building context-aware AI systems, where agents have access to account research, interaction history, scoring data, and real-time signals, are getting dramatically better outputs than teams running the same models with generic prompts. A research agent with access to a prospect’s LinkedIn activity, recent company news, technology stack data, and your previous interaction history produces outreach that reads like a thoughtful human wrote it. The same model with just a name and company produces something that reads like a mail merge. The context is the product. The model is almost interchangeable.
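The difference between a generic prompt and an engineered context can be sketched without calling any model at all. In this illustrative example, `build_context` and the account field names are hypothetical. The function only assembles the text a model would receive.

```python
def build_context(task: str, account: dict) -> str:
    """Assemble the model input from the task plus whatever account context exists."""
    sections = [
        ("Recent LinkedIn activity", account.get("linkedin_activity")),
        ("Company news", account.get("company_news")),
        ("Technology stack", account.get("tech_stack")),
        ("Previous interactions", account.get("interaction_history")),
    ]
    parts = [f"Task: {task}"]
    for title, items in sections:
        if items:  # skip sources with nothing to contribute
            parts.append(f"{title}:\n" + "\n".join(f"- {item}" for item in items))
    return "\n\n".join(parts)


task = "Draft a first-touch email to Jane Doe at Acme Corp."

# Generic prompt: the model sees only a name and a company.
thin = build_context(task, {})

# Engineered context: the same task with made-up research attached.
rich = build_context(task, {
    "company_news": ["Opened a second warehouse last month"],
    "tech_stack": ["Uses a legacy routing tool"],
    "interaction_history": ["Downloaded the pricing guide in March"],
})

print(thin)
print("---")
print(rich)
```

The task line is identical in both cases. Everything that separates the two inputs comes from the infrastructure that gathers and formats the context.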

Recent research on what’s being called “agentic context engineering” has shown that systems designed this way can achieve 10%+ performance improvements on benchmark tasks without any model fine-tuning. The system gets smarter by managing context better, not by changing the underlying model. That’s a big deal for GTM teams, because it means you don’t need a machine learning team to build an intelligent revenue system. You need good architecture.

What this means if you’re building right now

If you’re standing up a GTM motion today, the single most important decision you’ll make isn’t which email tool to buy or which AI model to use. It’s whether you’re going to assemble a collection of tools or design a system.

The tool approach is faster to start. You can have a sequence running in a day. But you’ll hit a ceiling quickly, and that ceiling is coordination. You’ll spend increasing amounts of time and money stitching things together, moving data around, and managing handoffs that a well-designed system would handle automatically.

The systems approach takes longer to build. Maybe six to eight weeks before it’s producing results comparable to a tool-based setup. But after that initial investment, it compounds. Every month, the system gets a little smarter, a little faster, a little better at identifying the right accounts and engaging them effectively. The gap between tool-based teams and system-based teams won’t close. It will widen.

For most teams, the practical starting point is the data layer. Get your account and contact data clean, enriched, and scored before worrying about outreach automation. Then build the intelligence layer: the AI agents that research accounts, compute scores, and make routing decisions. Then add execution and orchestration on top of a solid foundation.

The temptation is to start with execution because it feels productive. I get it. Buying an email tool and loading a sequence produces visible activity on day one. But visible activity without targeting precision and signal-based timing is just noise with good formatting. The teams that start with data and intelligence look slower for the first eight weeks. By month four, they’re operating at a level that the email-first teams can’t reach regardless of how many subject lines they A/B test.

The industry will continue to sell tool-level solutions because that’s what’s easy to package and demo. A new email platform gets a 30-minute slot at SaaStr and a slick product page. “Redesign your revenue architecture from the data layer up” doesn’t fit on a trade show banner. But the real leverage lives in the architectural decisions, not the tool selection. The organizations that figure this out will operate at a fundamentally different efficiency than the ones still swapping tools every quarter hoping for different results.

The teams that will win over the next three to five years are the ones that internalize this distinction between tools and systems at a strategic level. Not as a buzzword, but as an actual design principle that governs how they allocate budget, hire engineers, and make architectural decisions. The tools era produced linear companies. The systems era is producing compounding ones. And once you fall behind a compounding competitor, the math to catch up gets worse every quarter you wait.

Written by

Elom

GTM and Growth engineer with 12 years across Fortune 500s, fintech, and B2B startups. Building at the intersection of AI, data, and revenue.
