The Agentic GTM Stack Is a Trap (Unless You Do This First)

Every GTM agency is demoing Claude Code + MCPs as their differentiator. But tool consolidation without strategy consolidation just centralizes bad decisions faster. Here is what to do first.

Every GTM agency on LinkedIn is running the same demo right now. Open a terminal. Fire up Claude Code. Connect a few MCPs. Build an outbound campaign in 20 minutes. The crowd goes wild. 1,400 people watch the livestream. The playbook drops. The GitHub repo gets forked 300 times.

And then nothing changes.

I have been watching this convergence accelerate for months. The tool consolidation narrative has fully taken hold. The pitch has shifted from “here is my best stack” to “I run everything from one terminal.” It sounds compelling. It looks modern. And for most teams adopting it, it is going to centralize bad decisions faster than they have ever been centralized before.

The Convergence Problem

A recent survey of 62 GTM leaders tells a clear story. Clay sits at 71% adoption. n8n is at 48%. Instantly is at 35%. HeyReach is at 35%. Lovable is at 29%. These are not suggestions from a vendor pitch deck. These are the tools that actual operators are running in production.

The convergence is real. The top of the GTM market is consolidating around a remarkably narrow set of tools. And now, layered on top of that, is the Claude Code + MCP layer that promises to wire them all together from a single interface. One terminal to rule them all.

Here is the problem nobody is talking about: when everyone runs the same stack, the stack stops being a differentiator. If your competitor is running Clay into Instantly through n8n with Claude Code orchestrating the whole thing, and you are running the exact same pipeline, what is your edge?

The answer is not the tools. It never was.

Tool Consolidation Without Strategy Consolidation

I have spent enough time building revenue systems to know that the failure mode here is predictable. Teams see a live demo. They get excited about consolidation. They rip out four tools and replace them with one orchestration layer. They feel productive because the terminal is fast and the MCP calls are clean.

But they skipped the hard part.

They never mapped their revenue architecture. They never defined what signals actually matter for their ICP. They never built a scoring model that reflects their actual close patterns. They never audited their data flow from signal detection to CRM write-back to understand where value gets created and where it gets lost.

They just made their existing bad process faster.

A 12-tool stack with clear data flow, well-defined handoff points, and a scoring model calibrated to real revenue outcomes will outperform a single-terminal setup with no strategy every time. I do not care how elegant the orchestration looks. Garbage in, garbage out. The speed of the garbage is irrelevant.

What “Agentic” Actually Means (And What It Does Not)

The word “agentic” has become load-bearing in GTM marketing. Every agency is using it. Every pitch deck features it. But most teams using the word are describing automation, not agency.

Real agentic systems make decisions. They evaluate context, weigh options, take actions, and adapt based on outcomes. They have feedback loops. They learn. An agentic GTM system does not just send emails. It evaluates which accounts to prioritize, selects the right channel, crafts contextual messaging, monitors engagement signals, and adjusts its approach based on response patterns.

What most teams are calling “agentic” is a Claude Code script that runs a predetermined sequence of API calls. That is a workflow. A useful one, maybe. But calling it agentic is like calling a dishwasher a chef because it handles the plates.

The distinction matters because it changes what you invest in. If you think agentic means better automation, you invest in prompts and tool connections. If you understand that agentic means decision-making under uncertainty, you invest in data quality, scoring models, feedback loops, and outcome measurement. The first path gets you a faster version of what you already have. The second path gets you something that compounds.

The Architecture Before the Tool

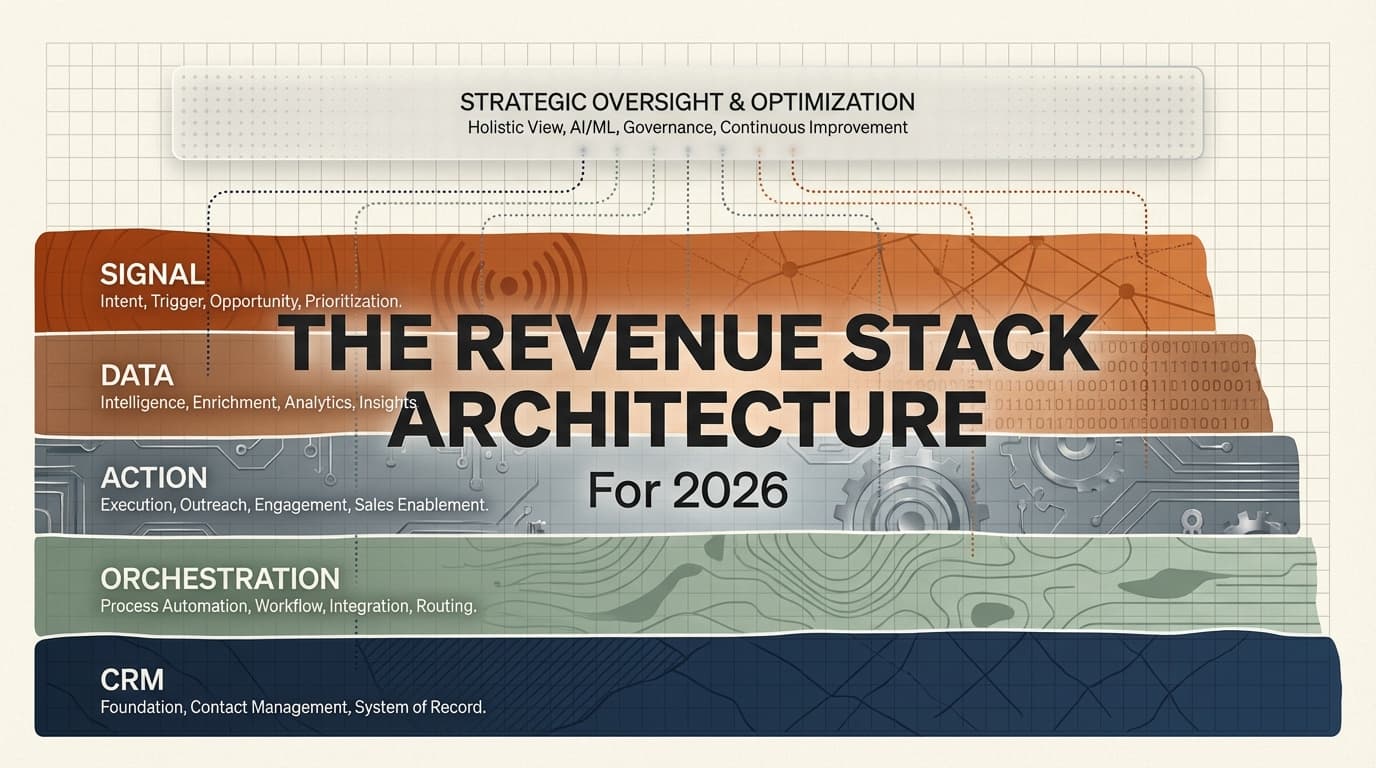

Here is what I recommend before you touch a single MCP configuration. Map your revenue architecture first. Not your tool stack. Your revenue architecture.

Start with signals. What events in the market actually indicate that a prospect is ready to buy? Not “downloaded a whitepaper.” Real buying signals. A company hiring for a role your product replaces. A competitor raising prices. A prospect engaging with three pieces of your content in a week. A technographic change that creates a gap your product fills.

Then map your data flow. Where do those signals get captured? How do they get enriched? What enrichment data actually correlates with close rate? Most teams enrich everything because they can, not because they should. I have seen teams spending $2,000 a month on waterfall enrichment that adds 15 data points per lead, of which 3 actually influence their scoring model. The other 12 are expensive noise.
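One rough way to separate useful enrichment from noise is to compare close rates for leads where a field is populated against leads where it is missing. The sketch below is illustrative only: the `closed_won` key, the field names, and the `field_lift` helper are assumptions, not part of any specific enrichment tool, and a real audit would need far more data and a proper statistical test.

```python
def field_lift(leads, fields):
    """Close rate when a field is populated minus close rate when it is missing.

    A lift near zero suggests the field is not informing outcomes.
    Returns None for a field when there is no contrast to compare.
    """
    lifts = {}
    for name in fields:
        populated = [lead["closed_won"] for lead in leads if lead.get(name) is not None]
        missing = [lead["closed_won"] for lead in leads if lead.get(name) is None]
        if not populated or not missing:
            lifts[name] = None  # field is always or never present
            continue
        lifts[name] = sum(populated) / len(populated) - sum(missing) / len(missing)
    return lifts


# Hypothetical sample data; replace with an export from your own CRM.
sample_leads = [
    {"closed_won": True, "tech_stack": "x", "headcount": 50},
    {"closed_won": True, "tech_stack": "y", "headcount": None},
    {"closed_won": False, "tech_stack": None, "headcount": 10},
    {"closed_won": False, "tech_stack": None, "headcount": 20},
]
print(field_lift(sample_leads, ["tech_stack", "headcount"]))
```

Fields whose lift stays close to zero across a meaningful sample are candidates to stop paying for.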

Then define your action layer. What happens when a signal fires and the data is enriched? Who or what takes the next step? Is it an email? A LinkedIn connection request? A phone call? An ad impression? The answer should vary by account tier, signal strength, and channel history. If every signal triggers the same action, you do not have an action layer. You have a blast cannon.
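To make "vary by account tier, signal strength, and channel history" concrete, here is a minimal sketch of an action selector. The `Account` fields, the thresholds (0.3 and 0.7), and the channel names are all hypothetical placeholders; the real values should come from your own close patterns.

```python
from dataclasses import dataclass, field


@dataclass
class Account:
    tier: int                # 1 = highest-value accounts (assumed convention)
    signal_strength: float   # 0.0 to 1.0, from your scoring model
    channels_tried: set = field(default_factory=set)


def next_action(account):
    """Pick the next touch based on tier, signal strength, and channel history."""
    if account.signal_strength < 0.3:
        return "ad_impression"  # weak signal: stay passive
    if account.tier == 1 and account.signal_strength >= 0.7:
        preferred = ["phone_call", "linkedin_request", "email"]
    else:
        preferred = ["email", "linkedin_request"]
    for channel in preferred:
        if channel not in account.channels_tried:
            return channel
    return "ad_impression"  # every direct channel already used


print(next_action(Account(tier=1, signal_strength=0.9)))
```

The point of the sketch is that the same signal produces different actions for different accounts, which is the opposite of a blast cannon.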

Then build your orchestration logic. Now, and only now, does the “single terminal” conversation become relevant. The orchestration layer connects signals to data to actions. It manages timing, prevents channel collision, handles deduplication, and enforces business rules. This is where Claude Code and MCPs shine. But they shine because they are executing a well-designed architecture, not because they replaced it.
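The orchestration responsibilities above (timing, collision prevention, deduplication) can be sketched as a small gatekeeper that every outbound touch must pass through. This is an assumption-laden illustration: the `Orchestrator` class, the two-day minimum gap, and the identifiers are invented for the example and do not reflect any particular MCP or Claude Code API.

```python
from datetime import datetime, timedelta


class Orchestrator:
    """Approves or suppresses touches so channels do not collide."""

    def __init__(self, min_gap=timedelta(days=2)):
        self.min_gap = min_gap   # business rule: minimum time between touches
        self.last_touch = {}     # account_id -> time of last approved touch
        self.handled = set()     # (account_id, signal_id) pairs already acted on

    def approve(self, account_id, signal_id, now):
        key = (account_id, signal_id)
        if key in self.handled:
            return False  # deduplication: this signal was already acted on
        last = self.last_touch.get(account_id)
        if last is not None and now - last < self.min_gap:
            return False  # collision: account was touched too recently
        self.handled.add(key)
        self.last_touch[account_id] = now
        return True


orchestrator = Orchestrator()
start = datetime(2024, 1, 1)
print(orchestrator.approve("acme", "hiring-signal", start))
```

A signal suppressed for timing is not marked as handled, so it can be retried once the gap has passed. Whether that is the right rule is a business decision, which is exactly why the architecture has to come before the tool.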

Finally, close the loop back to your CRM. Every signal, every action, every outcome needs to write back to a system of record. If your CRM does not reflect what actually happened in your pipeline, you cannot learn from it. And if you cannot learn, your “agentic” system is just a very fast manual process.

The Five-Layer Test

Before adopting any new orchestration tool, run this diagnostic on your current system. Score each layer from 0 to 5.

Signal Layer (0-5): Do you have defined buying signals beyond basic firmographic filters? Can you detect real-time changes in account behavior, hiring patterns, or technology adoption? Score 0 if your “signals” are just a static ICP filter. Score 5 if you are monitoring 3 or more signal sources with automated detection.

Data Layer (0-5): Is your enrichment strategy tied to your scoring model? Do you know which enrichment fields correlate with closed deals versus which are just “nice to have”? Score 0 if you enrich everything by default. Score 5 if you have validated which data points predict conversion and only enrich what matters.

Action Layer (0-5): Do your actions vary by account tier, signal type, and channel history? Or does every lead get the same 4-email sequence? Score 0 for uniform sequences. Score 5 for context-aware, multi-channel action selection.

Orchestration Layer (0-5): Can your system coordinate across channels without collision? Does it handle timing, deduplication, and business rules? Score 0 if each channel operates independently. Score 5 if a central orchestrator manages cross-channel coordination.

Feedback Layer (0-5): Do outcomes from your pipeline feed back into your signal detection and scoring? Does the system get smarter over time? Score 0 if you never update your scoring model. Score 5 if outcomes automatically recalibrate your system.

If your total score is below 15, you do not have a tool problem. You have an architecture problem. Adding Claude Code to a system scoring 8 out of 25 will give you a faster 8. That is not progress. That is velocity without direction.
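If it helps to keep the diagnostic honest, the scoring can be written down as a few lines of code. This sketch simply encodes the rules above (five layers, 0 to 5 each, an architecture problem below 15); the function name and the returned keys are my own choices.

```python
LAYERS = ("signal", "data", "action", "orchestration", "feedback")


def diagnose(scores):
    """Total the five layer scores and flag the weakest layer."""
    for layer in LAYERS:
        if layer not in scores:
            raise ValueError(f"missing score for layer: {layer}")
        if not 0 <= scores[layer] <= 5:
            raise ValueError(f"score for {layer} must be between 0 and 5")
    total = sum(scores[layer] for layer in LAYERS)
    weakest = min(LAYERS, key=lambda layer: scores[layer])
    return {
        "total": total,
        "weakest": weakest,
        "architecture_problem": total < 15,
    }


example = {"signal": 1, "data": 2, "action": 2, "orchestration": 3, "feedback": 0}
print(diagnose(example))
```

With the example scores the total is 8 out of 25 and the weakest layer is feedback, which is where the work should start.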

The Real Moat Is Not the Terminal

One agency turned 24 employees into LinkedIn creators. 581 posts in 90 days. 27 new clients. $153K in new MRR. That did not come from a Claude Code demo. It came from a distribution strategy that leveraged human authority at scale.

Another ran outbound and thought leader ads hitting the same accounts simultaneously. $7.8M in pipeline on $233K in ad spend, roughly $33 of pipeline per ad dollar, with a reported 15x ROI. The orchestration mattered, sure. But the strategy of converging paid and outbound on identical account lists is what created the multiplier. The tool just executed it.

A third built free tools on their website using AI. 100,000+ visitors per quarter, 30% from organic search. They also built an AI onboarding system that cut client setup from 4 hours to 12 minutes, then started selling that system as its own product. The moat was not the AI. The moat was seeing onboarding friction as a productizable problem.

In every case, the competitive advantage came from strategic clarity, not tooling. The teams that win are not the ones with the most elegant terminal setup. They are the ones who mapped their revenue architecture first, identified where leverage exists, and then chose tools that amplify that leverage.

Notice a pattern across these examples. The LinkedIn army worked because the team had a distribution thesis: human authority scales trust faster than brand accounts do. The ABM convergence worked because someone mapped the account overlap between outbound lists and ad audiences, then designed the timing to compound impressions. The free tools worked because the team understood that SEO traffic into interactive tools converts at 5 to 10x the rate of SEO traffic into blog posts.

None of these required a specific orchestration layer. All of them required strategic insight that no tool provides out of the box.

The Contrarian Case for Complexity

I want to be fair to the consolidation crowd. There are real cases where reducing tool count improves outcomes. If your team spends 40 minutes per day switching between tabs, losing context, and manually copying data between systems, consolidation saves real hours. If your enrichment pipeline has four manual handoff points where data gets dropped or duplicated, a single orchestration layer eliminates those failure modes.

The issue is not consolidation itself. The issue is treating consolidation as a strategy rather than an implementation detail. The best operators I talk to frame it this way: “We mapped our architecture, identified friction points, and then consolidated the tools at those friction points.” That is the right order. Architecture first, then consolidation where it reduces friction. Not the other way around.

A contrarian data point worth considering: one practitioner recently shared that long-form, highly personal cold emails are outperforming optimized short templates. Another showed that including images in cold email, despite worse deliverability, produces more total replies because the conversion rate jump more than compensates. In both cases, the conventional wisdom that more efficiency equals better results turned out to be wrong. The lesson: operational efficiency and strategic effectiveness are not the same thing. Your agentic stack can make operations efficient. But only your architecture determines whether those operations are effective.

What I Am Betting On

I think the Claude Code + MCP layer is genuinely useful. I use variations of it in my own work. The ability to orchestrate multiple systems from a single interface, with natural language as the control plane, is a real productivity gain.

But I am not betting on it as a differentiator. I am betting on architecture. Specifically, I am investing in three areas.

First, signal quality over signal volume. Most teams are drowning in intent data they do not know how to act on. I would rather have 5 high-fidelity signals that reliably predict buying behavior than 50 weak signals that generate noise. Building the scoring model to separate the two is the real work.

Second, feedback loops that actually close. Most GTM systems are open-loop. Actions go out, some results come back, but the results never feed back into the signal detection or scoring logic. A closed-loop system where outcomes recalibrate the input is what makes a revenue engine compound instead of plateau.
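A closed loop can be as simple as letting outcomes adjust signal weights. The sketch below is one possible approach, not a recommended model: it weights each signal by its smoothed historical win rate, `(wins + 1) / (fires + 2)`, so unseen signals start at 0.5 and drift as outcomes arrive. The class and signal names are hypothetical.

```python
from collections import Counter


class SignalWeights:
    """Recalibrates signal weights from closed-won and closed-lost outcomes."""

    def __init__(self):
        self.fired = Counter()  # times each signal was present on a closed deal
        self.won = Counter()    # times it was present on a closed-won deal

    def record_outcome(self, signals, closed_won):
        for signal in signals:
            self.fired[signal] += 1
            if closed_won:
                self.won[signal] += 1

    def weight(self, signal):
        # Smoothed win rate; a signal with no history gets 0.5.
        return (self.won[signal] + 1) / (self.fired[signal] + 2)

    def score(self, signals):
        return sum(self.weight(signal) for signal in signals)


weights = SignalWeights()
weights.record_outcome(["hiring_for_replaced_role"], closed_won=True)
weights.record_outcome(["whitepaper_download"], closed_won=False)
print(weights.weight("hiring_for_replaced_role"), weights.weight("whitepaper_download"))
```

The mechanism matters more than the formula: every outcome written back to the system of record changes how the next account is scored.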

Third, channel-specific content architectures. Not one blog that serves all purposes. Separate content strategies for organic search, AI search, social distribution, and outbound supporting content. Each channel has different mechanics, different buyer states, and different content formats that perform. Treating them as one undifferentiated “content strategy” is a recipe for mediocrity across all of them.

The agentic GTM stack is only a trap if you let the tool replace the strategy. Do the architecture work first. Map your signals, data, actions, orchestration, and feedback loops. Score yourself honestly. Fix the weakest layer before adding new tools.

The operators who get this right will build systems that compound. The ones chasing the latest terminal demo will rebuild their stack again in six months when the next tool drops.

I know which side I want to be on.

Written by

Elom

GTM and Growth engineer with 12 years across Fortune 500s, fintech, and B2B startups. Building at the intersection of AI, data, and revenue.
