The Revenue Stack Architecture for 2026

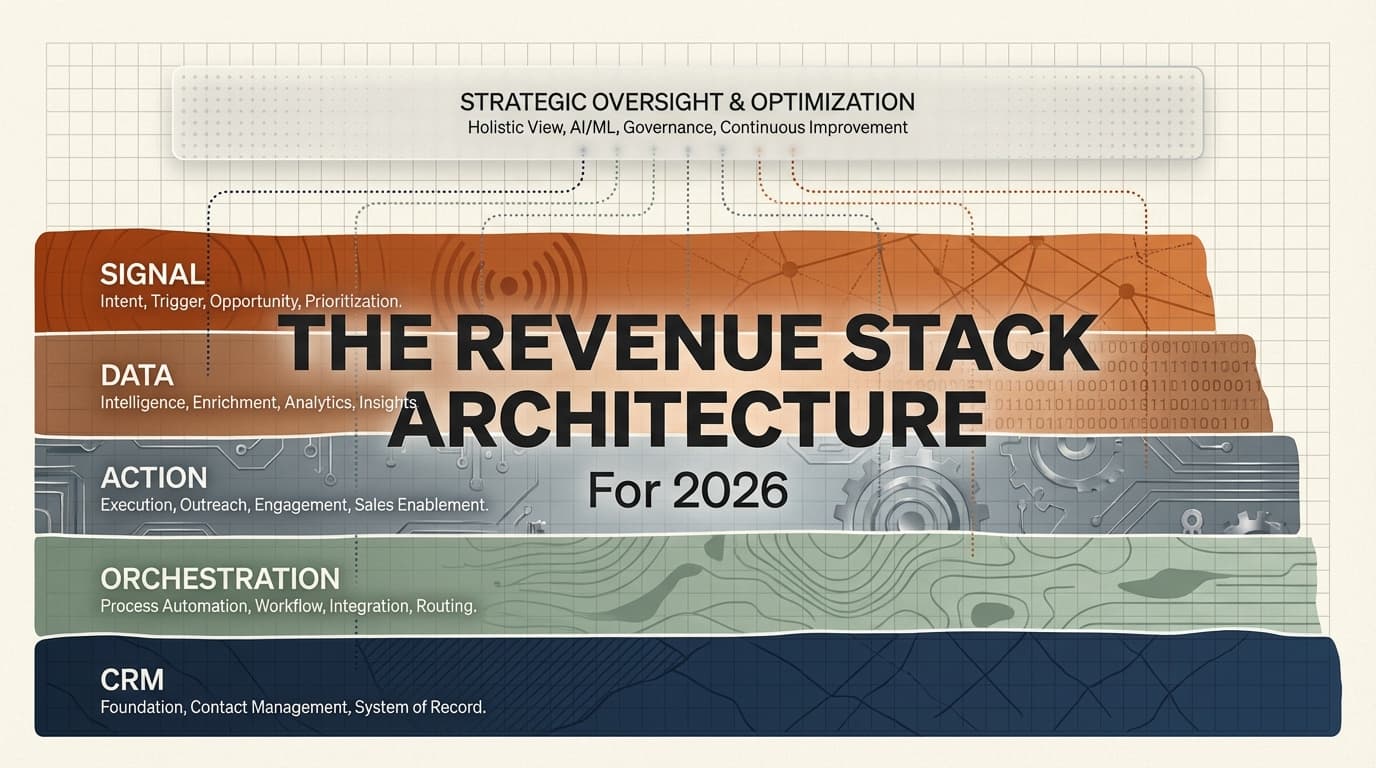

A reference architecture is forming across the best GTM teams. It has 5 layers, but most teams only build 2-3. The real insight is what sits between the layers: data caches, agent orchestration, and feedback loops.

A reference architecture is forming across the best-performing GTM teams in 2026. Not because anyone published a spec or held a standards committee meeting. It formed the way most useful standards form: practitioners kept solving the same problem, and the solutions started converging.

I spent the last quarter analyzing the tech stacks, workflows, and published data from dozens of GTM leaders and operators. I surveyed tool adoption across 62 GTM professionals. I studied what the fastest-growing agencies and in-house teams are actually building. What emerged was not a tools list. It was a layered architecture with clear winners at each level, predictable failure modes when layers are missing, and a pattern that separates teams that compound from teams that just execute.

Here is the architecture. Five layers, one missing layer nobody talks about, and the connective tissue between them that determines whether your stack generates leverage or just generates activity.

The 5-Layer Revenue Stack

The convergence is real. When you study what high-performing GTM teams have built over the past 12 months, the same structural pattern appears regardless of company size, vertical, or go-to-market motion. The layers are:

- Signal. What triggers outreach.

- Data. What you know about the target.

- Action. How you reach them.

- Orchestration. What coordinates the workflow.

- CRM. Where the record lives.

Most teams have built two or three of these layers well. Almost none have built all five. And the gap between a two-layer stack and a five-layer stack is not 2.5x performance. It is an order of magnitude, because the layers interact.

Let me walk through each one with the data on what is winning, what is failing, and where the architecture is heading.

Layer 1: Signal

The signal layer answers one question: who should we talk to right now, and why?

This is the layer most teams skip entirely. They substitute static lists for dynamic signals. They pull 10,000 leads from a database, blast them all, and call it outbound. That worked in 2022. In 2026, the teams generating the highest reply rates are the ones triggering outreach based on real-time events: job changes, funding rounds, tech stack shifts, hiring patterns, product launches, contract expirations.

The signal layer has two subcategories. Intent signals detect when a prospect is actively researching your category. They visit your pricing page, engage with your content, or show up in community channels asking questions about problems you solve. Trigger events detect when something has changed in the prospect’s world that creates an opening. A new VP of Sales starts. The company raises a Series B. They post a job listing for a role that implies they need what you sell.

The tools winning this layer are purpose-built signal aggregators. Community and product-usage signal platforms are gaining adoption for intent detection. Company intelligence APIs that track hiring, funding, and technology changes are becoming standard for trigger events. The key shift is from “who fits our ICP” to “who fits our ICP and has a reason to care right now.”

Teams that skip this layer pay for it downstream. Their action layer has to work harder because it is reaching people with no context and no urgency. Their reply rates sit at 1-2% instead of 5-8%. They compensate by increasing volume, which burns domains and tanks deliverability. It is a death spiral that starts at the signal layer.

Layer 2: Data

The data layer is where the 2026 stack diverges most sharply from the 2024 stack.

Two years ago, data meant “buy a list from a provider.” You picked one vendor, exported contacts, uploaded them to your outbound tool, and hoped the emails were valid. The data layer was thin. One provider, one pull, one shot.

In 2026, the data layer has three components: enrichment, waterfall, and cache.

Enrichment is the process of taking a signal (someone fits our ICP and just raised funding) and building a complete profile. Company data, contact data, technographic data, intent data. The richer the profile, the more personalized the outreach, the higher the conversion.

Waterfall enrichment is the tactical innovation that changed this layer. Instead of relying on a single data provider, the best teams chain 4-8 providers in sequence. Provider A has a 60% match rate. Provider B fills in 15% of the gaps. Provider C catches another 10%. By the end of the waterfall, you have 85-90% coverage instead of 60%. This is not theoretical. It is standard practice among top operators.

The cache is the structural insight that separates good data layers from great ones.

One practitioner I studied caches 23 million verified email records in a Supabase instance. Cost: $30 per month. Every time their waterfall enrichment runs, verified results get stored. The next time they need that contact, they pull from cache instead of paying a data provider again. Over 12 months, this saves thousands of dollars in re-purchasing data they already validated. The cache also functions as a deduplication layer. If a lead has already been contacted by Campaign A, the cache prevents them from entering Campaign B.

This pattern is spreading. The teams that treat their data layer as a depreciating asset (buy once, use once, discard) are paying 3-5x more per enriched lead than teams that treat it as an appreciating asset (buy once, cache forever, reuse across campaigns).

The tool winning this layer is not even close. In the survey of 62 GTM leaders, one enrichment and workflow platform appeared in 71% of stacks. Nothing else comes close. The next most common data tool sits around 29%. When a single tool reaches 71% adoption in a fragmented market, it is no longer a tool choice. It is infrastructure.

But here is the part most people miss: the tool itself is not the advantage. The advantage is what you build on top of it. The API-first operators who connect enrichment directly to their orchestration layer, bypassing the UI entirely, run 10x the volume at a fraction of the manual effort. The ones still clicking through a graphical interface are using a Formula 1 engine to power a golf cart.

Layer 3: Action

The action layer is where outreach happens. Emails get sent. LinkedIn messages land. Ads run. Content publishes.

This is the layer most teams over-invest in relative to the layers above it. They spend weeks perfecting email copy while running on garbage signals and thin data. It is like optimizing the paint job on a car with no engine.

That said, the action layer has its own structural patterns worth understanding.

For cold email execution, two platforms dominate at roughly 35% adoption each among GTM leaders. The market has consolidated from a dozen options to essentially two credible choices, plus a handful of niche alternatives. The differentiator is no longer the platform itself but the infrastructure underneath it: domain rotation, warmup protocols, deliverability monitoring, and send-time optimization.

For LinkedIn outreach, one automation platform holds about 35% adoption. LinkedIn remains the highest-converting outbound channel for B2B, but the automation layer is increasingly commoditized. The real differentiation is happening in content, not connection requests.

For content and ads, the most interesting shift is the convergence of outbound and paid. One agency documented a campaign where they ran cold email and thought leader ads against the same account list simultaneously. The outbound created awareness. The ads reinforced credibility. The combined motion generated $7.8 million in pipeline with a 15x return on $233,000 in ad spend. The insight is that 80% of their ad budget goes to thought leader ads (founder-face content) rather than brand ads. This performs better because it does not feel like advertising. It feels like a smart person sharing their perspective, which is exactly what it is.

The action layer’s future is multi-channel by default. Not “we do email and sometimes LinkedIn” but orchestrated sequences where the same account receives coordinated touches across email, LinkedIn, content, and ads within the same buying window. The teams doing this are seeing 3-5x the pipeline of single-channel outbound.

Layer 4: Orchestration

This is the layer that changed the most between 2025 and 2026. And it is the layer where the architecture’s future is being written.

Orchestration is the connective tissue. It answers: when a signal fires, what happens next? When enrichment completes, where does the data go? When an email bounces, what is the fallback? When a prospect replies, how does the handoff work?

In 2024, orchestration meant Zapier. Simple if-then automations connecting SaaS tools. It worked for basic workflows but broke down the moment you needed conditional logic, error handling, or multi-step sequences with branching.

In 2025, the orchestration layer shifted to more capable workflow platforms. In the survey data, one open-source automation tool reached 48% adoption among GTM leaders. It offered what the simpler tools could not: complex branching, AI nodes for decision-making, self-hosted deployment for data control, and a visual builder that technical operators could extend with code.

In 2026, a second orchestration paradigm is emerging alongside workflow builders. AI-native development environments paired with model context protocols are replacing multi-tool tab-hopping entirely. One bootstrapped services firm documented how their entire GTM operation runs from a single terminal. Campaign creation, data enrichment, CRM updates, client handovers, content generation, financial operations. All of it in one interface, connected to external services through standardized protocols.

This is not a marginal improvement. Traditional GTM workflows require an operator to context-switch between 6-8 browser tabs, copy-paste data between tools, and manually trigger each step. The terminal-native approach eliminates all of that. One practitioner demonstrated building a complete outbound campaign in under 20 minutes during a live session with 1,400 viewers. The same workflow in a traditional stack takes 2-4 hours.

The adoption numbers tell the story. Among the 62 GTM leaders surveyed, 29% reported using rapid application development platforms (sometimes called “vibe coding” tools) to build custom GTM tools. These are not developers. These are operators who can now build exactly the workflow they need instead of contorting their process to fit a vendor’s product decisions.

The orchestration layer is where the gap between “good” and “great” is widest. A team with strong signals, rich data, and solid action tools but weak orchestration will still operate at 30-40% of their potential. The orchestration layer is the multiplier.

Layer 5: CRM

The CRM layer is the system of record. It is the least exciting layer and the most politically fraught.

Here is what the data shows: the dominant CRM incumbent is losing share among the fastest-growing GTM teams. In the survey, a newer CRM built for modern workflows holds 32% adoption. That number would have been unthinkable three years ago. The shift is driven by three factors. First, the incumbent’s pricing scales punitively with team size, which hits startups and small agencies hardest. Second, the newer tools offer API-first architectures that integrate cleanly with the orchestration layer. Third, the UI is designed for operators, not administrators.

But the CRM layer’s real problem is not which tool you pick. It is how disconnected the CRM typically is from everything above it. In most stacks, the CRM is a data graveyard. Leads enter, stages get updated manually, and the feedback loop from “deal closed” back to “which signal triggered this lead” is nonexistent.

The best teams in 2026 are solving this by treating the CRM not as the destination but as one node in a feedback loop. When a deal closes, the attributes of that deal (source signal, enrichment data, outreach sequence, response patterns) feed back into the signal and data layers. The system literally learns which signals predict revenue. This is not machine learning in the academic sense. It is structured data flowing backwards through the stack to improve targeting upstream.

The CRM layer matters. But it matters less for what it stores and more for what it feeds back.

The Missing 6th Layer: AI Visibility

Here is where the architecture gets interesting. Every framework I have seen from GTM practitioners covers layers one through five. Signal, data, action, orchestration, CRM. It is a clean model that maps the outbound motion end to end.

But it is missing a layer. And the missing layer may end up being the most important one.

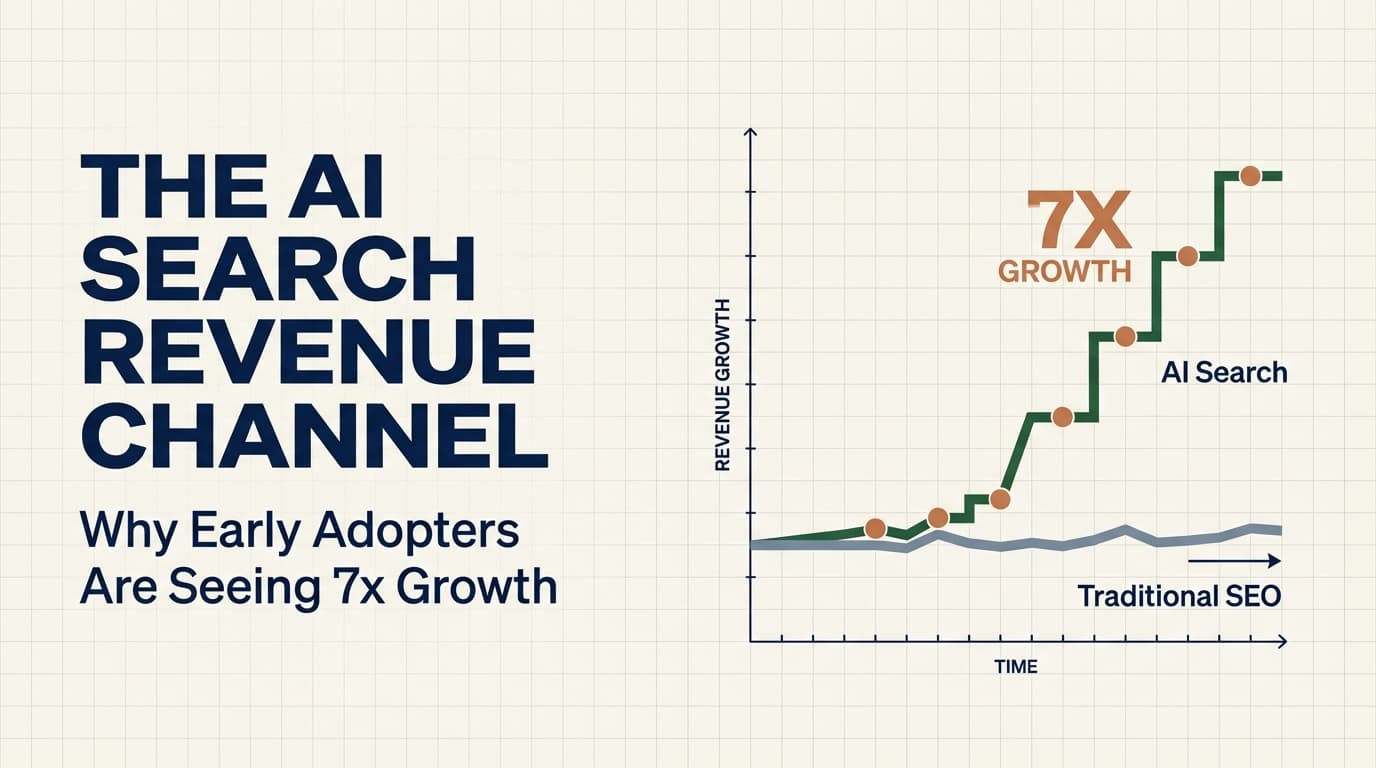

AI visibility. Some call it GEO (generative engine optimization). Others call it AI search optimization. The label matters less than the structural reality: large language models are becoming a primary research channel for B2B buyers, and your presence (or absence) in their responses is a revenue variable.

The numbers are early but striking. One GTM agency published their results from investing in AI search optimization: $506,000 in contract value sourced from AI search in four months. LLM-originated traffic grew 7.6x during that period. These are not vanity metrics. That is closed revenue tied directly to a channel that did not exist 18 months ago.

Another team built free tools on their website using AI-native development. Result: 100,000 visitors per quarter, with 30% coming from organic search. The tools themselves became lead magnets. They rank in traditional search and get cited in AI responses because they provide structured, useful answers to specific questions.

The AI visibility layer sits above the entire stack. It is not outbound. It is not inbound in the traditional sense. It is a new category: being present in the answers that AI systems generate when your prospects ask questions about the problems you solve.

Why does this layer belong in the revenue stack architecture? Because it feeds the signal layer. When a prospect interacts with an AI-generated response that mentions your company or links to your content, that interaction generates a signal. That signal feeds enrichment. Enrichment feeds action. The stack becomes circular rather than linear.

The teams that recognize this are building structured content specifically designed for LLM consumption. Long-form FAQ sections. Programmatic SEO pages that answer specific questions in formats LLMs can parse and cite. Technical documentation that demonstrates expertise on the exact queries their buyers ask AI assistants.

I would argue that within 18 months, AI visibility will be as standard a layer in the revenue stack as CRM is today. The teams building it now will have a compounding advantage that is nearly impossible to replicate quickly, because LLM citation patterns reward depth and consistency over time.

What Sits Between the Layers

The layers themselves are table stakes. Any team with budget and taste can assemble good tools at each level. The actual differentiation is in what connects them.

Three connective patterns separate compounding stacks from static ones.

The Data Cache Pattern

I mentioned the $30/month Supabase cache earlier, but the pattern extends beyond email verification. The best teams are building persistent data stores that sit between every layer transition. Signal-to-data: cache which signals have been processed and what they yielded. Data-to-action: cache which leads have been contacted, when, and through what channel. Action-to-CRM: cache response data before it enters the CRM so you can analyze patterns without querying a rate-limited CRM API.

The cache pattern transforms each layer interaction from a one-time event into a reusable asset. Every dollar you spend on enrichment contributes to a growing database that makes future enrichment cheaper. Every outreach campaign generates response data that improves future targeting. The cache is what makes the stack compound.

The API-First Pattern

There is a clear divide between teams that operate through graphical interfaces and teams that operate through APIs. The API-first teams run higher volume, make fewer errors, and iterate faster. This is not about technical sophistication for its own sake. It is about removing bottlenecks.

When your enrichment layer is API-connected to your orchestration layer, a new signal can trigger enrichment, score the lead, personalize a message, and queue it for sending without a human touching anything. When the same workflow requires clicking through four different browser tabs, the throughput drops by 10x and the error rate increases with every manual step.

The survey data shows this split clearly. The tools with highest adoption (the enrichment platform at 71%, the workflow tool at 48%) are the ones with the strongest API and integration ecosystems. Adoption correlates with programmability. If you cannot connect a tool to everything else in your stack through code, it will eventually get replaced by one you can.

The Feedback Loop Pattern

This is the pattern that defines whether a stack compounds or merely executes.

In a linear stack, data flows in one direction: signal triggers enrichment, enrichment feeds action, action updates CRM. Done. Every new campaign starts from scratch. You learn nothing structurally from the last one.

In a compounding stack, data flows backwards. Closed deals feed attributes back to the signal layer: which trigger events preceded this deal? Which enrichment data points correlated with conversion? Which outreach channels and messaging angles performed best for this ICP segment?

One agency built an AI onboarding system that processes new clients in 12 minutes instead of 4 hours. They then productized that system and sell it to other agencies. That is a feedback loop. The operational improvement became a product. The product generates revenue. The revenue funds more operational improvements.

Another example: the employee LinkedIn army playbook. One agency turned 24 employees into LinkedIn creators through an internal competition with cash prizes. The result: 581 posts, 27 new clients, $153,000 in monthly recurring revenue. The content creates awareness. Awareness feeds the signal layer with inbound interest. Inbound interest feeds the data layer with self-qualified leads. The stack closes on itself.

The teams that build all six layers with feedback loops between them are not just better at GTM. They are playing a different game. Their cost per lead decreases over time because the cache grows. Their conversion rate increases over time because the signal layer learns. Their content compounds because AI visibility rewards consistency. Every quarter, the gap between them and linear-stack operators widens.

What This Architecture Predicts

If this reference architecture holds, and the convergence data suggests it will, three predictions follow.

Tool consolidation will accelerate. The shift from “best stack” to “fewest tools connected properly” is not a trend. It is a structural inevitability. Every additional tool is a potential point of failure, a data silo, and a context switch. The winning stacks in 2027 will have fewer tools than the winning stacks in 2026, connected more deeply.

The orchestration layer will eat the action layer. As AI-native development environments mature and model context protocols standardize, the distinction between “tool that sends emails” and “tool that orchestrates workflows” will collapse. Orchestration will subsume action. The workflow engine will send the email, post the LinkedIn message, and trigger the ad. The standalone action tools will either become API endpoints consumed by orchestration layers or they will lose market share.

AI visibility will become the primary top-of-funnel for B2B. Not in 2026. Probably not fully in 2027. But the trajectory is clear. When 7.6x traffic growth happens in four months from a channel that barely existed before, that is not a blip. That is the early slope of an adoption curve. The teams that build their AI visibility layer now, while it is still relatively easy to establish presence, will own positions that become exponentially more expensive to compete for later.

The Architecture Thesis

I have been building and studying revenue stacks for years. I have seen the tool-obsessed approach fail. I have seen the process-obsessed approach plateau. And I have seen the architecture-aware approach compound.

Here is what I believe: the teams that build all six layers of this stack, with the data cache, API-first connectivity, and feedback loops between them, will generate 5-10x the pipeline per dollar spent compared to teams running a two or three-layer stack.

Not because they have better tools. Because they have a system.

A system that gets smarter with every campaign. A system where the cost of acquiring the next customer decreases over time instead of increasing. A system where content, outbound, and AI visibility reinforce each other instead of operating in silos.

Most teams will not build this. Most teams will buy a couple of tools, stitch them together with manual processes, and wonder why their cost per lead keeps rising. That is fine. The architecture is not for most teams.

It is for the ones who understand that revenue is engineered, not hacked. That compound advantages come from structural decisions, not tactical ones. And that the difference between executing and compounding is the difference between a stack of tools and a system that learns.

Build the system.

Enjoying this essay?

Written by

Elom

GTM and Growth engineer with 12 years across Fortune 500s, fintech, and B2B startups. Building at the intersection of AI, data, and revenue.

Get the next deep-dive in your inbox

Essays on GTM, growth engineering, and what's actually working. Free.