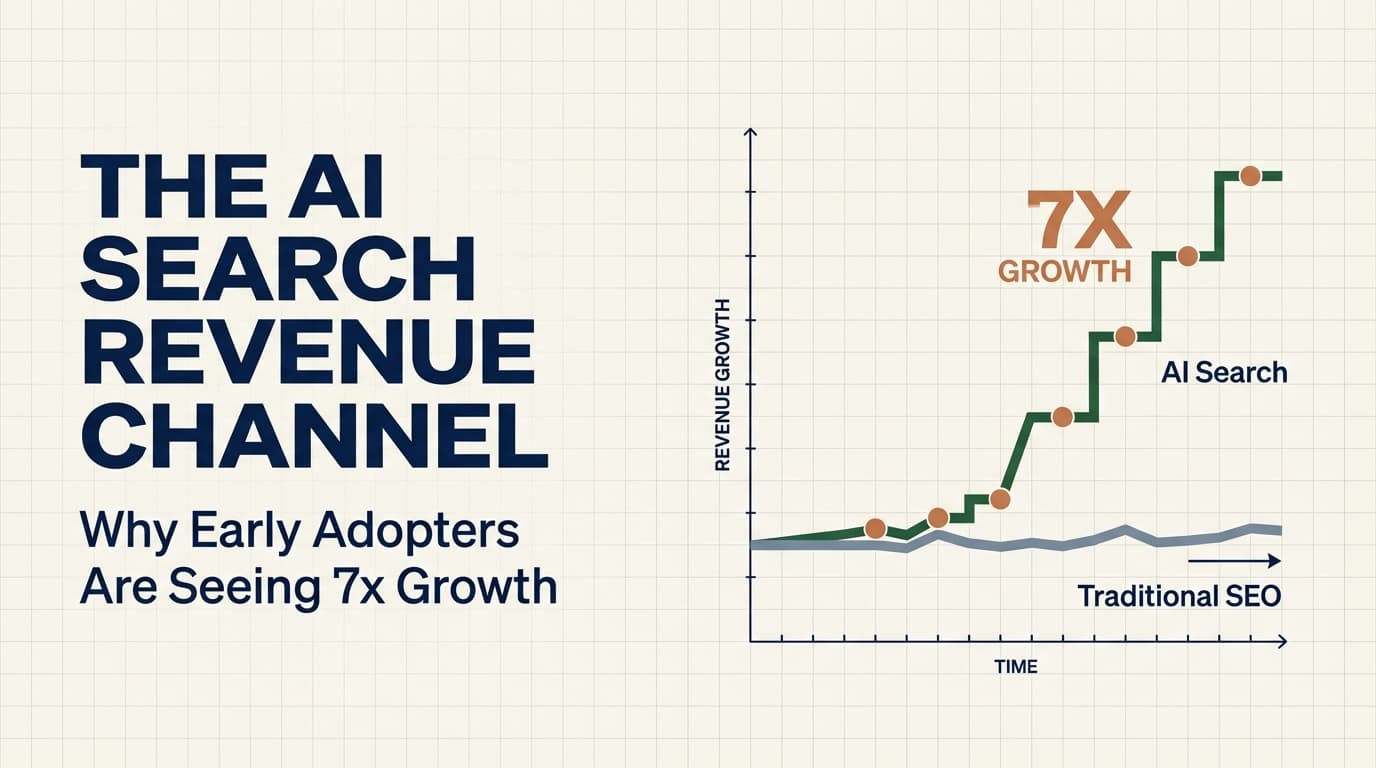

The AI Search Revenue Channel: Why Early Adopters Are Seeing 7x Growth

One agency reported $506K in contract value from AI search in 4 months with 7.6x LLM traffic growth. AI search is becoming a real pipeline source. But most teams are treating it as SEO 2.0 instead of a fundamentally different channel.

One GTM agency published their AI search numbers last month. $506K in contract value attributed to LLM-driven traffic. In four months. Their LLM traffic grew 7.6x over that same period. These are not projections or thought experiments. These are revenue numbers tied to a specific channel that most GTM teams have not even started building for.

I have been tracking the emergence of AI search as a pipeline source for the past year. The data is starting to move from “interesting signal” to “real channel.” And the gap between teams who are investing now and teams who are waiting for best practices is widening fast.

The Channel Nobody Is Building For

Here is what is happening beneath the surface. When a buyer asks ChatGPT, Perplexity, Claude, or Gemini a question about a product category, the LLM does not just guess. When it has web search available, it retrieves indexed content, synthesizes an answer, and in many cases provides citations. Those citations drive traffic. That traffic converts.

The $506K number I mentioned came from a team that recognized this early and restructured their content specifically for LLM consumption. They did not just optimize their existing blog posts. They built a content architecture designed to be cited by AI models.

This is not SEO 2.0. Treating it as an extension of your existing search strategy will produce mediocre results because the mechanics are fundamentally different. Traditional SEO optimizes for ranking in a list of ten blue links. AI search optimization, or what the industry is calling GEO (Generative Engine Optimization), is about being the source an LLM chooses to cite when synthesizing an answer.

In Google, the difference between ranking third and ranking tenth is a few spots on a page. The difference between being cited by an LLM and being invisible is binary. You are either in the answer or you are not.

Why GEO Is Not SEO With a New Name

The fundamental unit of value in traditional SEO is the page. You write a page, optimize it for a keyword, build links to it, and hope Google ranks it. The searcher clicks through, lands on your page, and then you have a chance to convert them.

The fundamental unit of value in GEO is the answer. Specifically, a self-contained, authoritative, clearly structured answer that an LLM can extract and cite. The content still lives on your website. But the value is generated at the moment the LLM decides your content is the best source for its synthesized response.

This creates three strategic shifts that most teams are not accounting for.

Shift 1: From keyword density to entity authority. LLMs do not match keywords. They evaluate the authority of a source on a topic. A page that mentions “outbound sales automation” 14 times is doing keyword optimization. A page that comprehensively explains outbound sales automation with specific frameworks, real metrics, named methodologies, and clear definitions is building entity authority. The LLM picks the second one.

Shift 2: From page ranking to citation worthiness. In traditional SEO, your goal is to rank higher than competitors for a given query. In GEO, your goal is to be the source the LLM trusts enough to cite. This means your content needs to do something that other sources do not: provide original data, present a unique framework, offer a specific example, or state a clear position. Generic content that restates what ten other pages say will never be cited because the LLM has no reason to prefer it.

Shift 3: From traffic volume to answer presence. The metric that matters in GEO is not how many people visit your page. It is how often your content appears in LLM-generated answers. A page that gets 50 organic visitors but is cited in 500 LLM responses has more downstream value than a page that gets 5,000 organic visitors but is never referenced by an AI model. The 500 citations put your brand in front of buyers at the exact moment they are evaluating solutions.

The FAQ Strategy That Is Actually Working

Here is the specific tactic that is producing results right now. Teams that restructure their content into structured question-and-answer formats are seeing disproportionate LLM citation rates.

This makes intuitive sense. LLMs are trained to answer questions. When a user asks an LLM a question and there is a web page that contains that exact question with a comprehensive, authoritative answer, the LLM has a clean extraction target. It can pull the answer, attribute the source, and provide the citation.

One agency is positioning structured FAQ content as their primary GEO competitive moat. Not FAQ pages in the traditional “bury them in the footer” sense. Strategic FAQ architectures where every significant question a buyer might ask is answered comprehensively on a dedicated, well-structured page.

The anatomy of a GEO-optimized FAQ entry looks like this:

Clear question as an H2 or H3 heading. The question should match natural language queries, not keyword-stuffed variations. “How much does enterprise outbound cost per meeting?” not “Enterprise Outbound Cost Pricing Guide 2026.”

Comprehensive answer in the first paragraph. LLMs tend to extract from the top of a content block. If your answer starts with three paragraphs of context before getting to the point, the LLM will either skip you or extract the context instead of the answer. Lead with the answer. Expand below it.

Specific data within the answer. Numbers, percentages, price ranges, timelines. LLMs preferentially cite sources that provide specific data points because specificity signals authority. “Enterprise outbound typically costs $85-$200 per qualified meeting depending on ICP complexity and channel mix” is far more citable than “costs vary depending on your situation.”

Entity-rich supporting content. Mention specific tools, methodologies, frameworks, and concepts by name. LLMs use entity recognition to evaluate topical authority. A page that discusses outbound in the context of Clay, signal-based targeting, multi-channel sequencing, and buying intent scores reads as more authoritative than a page that speaks in abstractions.

Schema markup. FAQPage schema tells both traditional search engines and LLM crawlers exactly what the question-answer pairs are. It is metadata that makes extraction trivially easy. Teams running FAQPage schema on their structured Q&A content are seeing measurably higher citation rates.
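The anatomy above maps onto schema.org's FAQPage type fairly directly. Here is a minimal sketch in Python that turns question-and-answer pairs into a JSON-LD script tag. The helper names `build_faq_jsonld` and `to_script_tag` are made up for illustration, and the sample pair reuses the example question and answer from this section.

```python
import json

def build_faq_jsonld(pairs):
    """Build a schema.org FAQPage document from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }

def to_script_tag(doc):
    """Wrap the JSON-LD in the script tag that goes into the page HTML."""
    body = json.dumps(doc, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"

# Illustrative pair taken from the example earlier in this section.
faq = build_faq_jsonld([
    (
        "How much does enterprise outbound cost per meeting?",
        "Enterprise outbound typically costs $85-$200 per qualified meeting "
        "depending on ICP complexity and channel mix.",
    ),
])
print(to_script_tag(faq))
```

The question text in `name` should match the visible heading on the page, and the `text` field should carry the same lead answer a reader sees first.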

The Content Architecture for GEO

The teams seeing 7x LLM traffic growth are not just adding FAQ sections to existing pages. They are building a content architecture specifically designed for AI search visibility. Here is what that architecture looks like.

Layer 1: Topic Authority Pages. One comprehensive page per major topic in your domain. Not a 500-word blog post. A 2,500 to 4,000 word authoritative treatment that covers the topic from first principles through advanced application. These pages establish your domain as an authority on the topic, which influences how LLMs weight your content across all related queries.

Layer 2: Structured Q&A Pages. Dedicated pages that answer 10 to 20 specific questions within each topic cluster. Each question gets its own heading, its own comprehensive answer, and its own schema markup. These are the extraction targets. When a buyer asks an LLM a specific question, these pages are what get cited.

Layer 3: Data and Framework Pages. Original research, proprietary frameworks, benchmark data, and case studies with specific numbers. LLMs heavily favor original data because it cannot be found elsewhere. If your content contains data that 15 other pages also have, the LLM has no reason to cite you specifically. If your content contains data that only you have, the citation is yours.

Layer 4: Comparison and Decision Pages. Content that directly addresses buyer evaluation queries. “What is the difference between X and Y?” “Which approach is better for Z use case?” “How does this work compared to that?” These pages capture high-intent queries where buyers are actively making decisions. LLMs surface these in response to comparison and evaluation prompts, which are among the highest-converting query types.

Layer 5: Entity Definition Pages. Clear, authoritative definitions of concepts, terms, and methodologies specific to your domain. When an LLM encounters a term it needs to define or explain, it reaches for the most authoritative definition it can find. If you define the terminology in your space, you get cited every time the LLM references those concepts.

The Measurement Problem (And How to Solve It)

The biggest barrier to GEO investment right now is measurement. Traditional analytics cannot easily track “how often was my content cited by an LLM.” The $506K attribution I referenced earlier was possible because the team built custom tracking for LLM referral traffic, which shows up differently in analytics than traditional organic search.

Here is what you can track today.

LLM referral traffic. Traffic from ChatGPT, Perplexity, and other AI models shows up in your analytics with distinct referrer strings. Set up filtered views to isolate this traffic. Track it separately from traditional organic. Most analytics platforms now recognize these referrers, but you may need to create custom channel groupings.
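If your analytics platform lets you export raw referrer URLs, a custom channel grouping can be approximated in a few lines. This is a rough sketch, not a definitive list: the hostnames in `LLM_REFERRER_HOSTS` are assumptions to verify against the referrers you actually see, and `classify_referrer` is a hypothetical helper name.

```python
from urllib.parse import urlparse

# Assumed hostnames. Check them against your own analytics before relying on them.
LLM_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
    "gemini.google.com": "Gemini",
}

def classify_referrer(referrer):
    """Return the assistant name for a referrer URL, or None if it is not a known LLM."""
    host = (urlparse(referrer).hostname or "").lower()
    for known_host, name in LLM_REFERRER_HOSTS.items():
        # Match the exact host or any subdomain of it (e.g. www.perplexity.ai).
        if host == known_host or host.endswith("." + known_host):
            return name
    return None

for url in [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=outbound",
    "https://www.google.com/search?q=outbound",
]:
    print(url, "->", classify_referrer(url))
```

Visits that arrive with no referrer at all will not be caught this way, so treat the result as a floor on LLM-driven traffic, not a complete count.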

Citation monitoring. Regularly query the major LLMs with questions related to your domain and track whether your content is cited. This is manual and tedious, but it is the most direct measure of GEO performance. Some early-stage tools are automating this, but the space is immature. For now, build a weekly cadence of 20 to 30 queries across ChatGPT, Perplexity, Claude, and Gemini, and log which of your pages appear in the responses.

Content extraction rates. If you are running FAQPage schema and structured Q&A content, track which pages are receiving traffic from LLM referrers. Compare this against pages without structured formatting. The delta tells you how much your content architecture is contributing to GEO visibility.
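The comparison described here is simple arithmetic once the LLM-referred sessions are grouped by page. A small sketch, assuming a dictionary of LLM-referred session counts per page path and a set of paths that carry structured Q&A formatting (both hypothetical inputs):

```python
from statistics import mean

def structured_delta(llm_sessions_by_page, structured_pages):
    """Mean LLM-referred sessions per page: structured pages minus all other pages."""
    structured = [n for page, n in llm_sessions_by_page.items() if page in structured_pages]
    other = [n for page, n in llm_sessions_by_page.items() if page not in structured_pages]
    if not structured or not other:
        raise ValueError("need at least one page in each group")
    return mean(structured) - mean(other)

# Hypothetical counts for one month.
sessions = {"/faq/cost-per-meeting": 40, "/faq/signal-targeting": 20,
            "/blog/our-story": 10, "/blog/launch-notes": 0}
print(structured_delta(sessions, {"/faq/cost-per-meeting", "/faq/signal-targeting"}))
```

A positive delta is a correlation, not proof that the formatting caused the lift, so compare pages on similar topics where possible.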

Pipeline attribution. This is the hard one. Connect LLM referral traffic to your CRM to measure actual pipeline influence. The $506K number was possible because the team tracked the full journey from LLM referral visit to conversion event to deal close. Without this connection, you are measuring traffic, not revenue.
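To make that connection concrete, here is a sketch of the join between web sessions and CRM deals. It assumes a first-touch model, which is my assumption and not necessarily how the agency behind the $506K figure did it. The field names (`contact_id`, `timestamp`, `llm_source`, `stage`, `amount`) are hypothetical and would need to be mapped to your own analytics and CRM exports.

```python
def llm_attributed_contract_value(sessions, deals):
    """Sum closed-won deal value for contacts whose first recorded session came from an LLM.

    sessions: dicts with contact_id, timestamp (ISO 8601 string), llm_source (name or None)
    deals:    dicts with contact_id, stage, amount
    """
    first_touch = {}
    # ISO 8601 timestamps in the same timezone sort correctly as strings.
    for s in sorted(sessions, key=lambda s: s["timestamp"]):
        first_touch.setdefault(s["contact_id"], s["llm_source"])

    total = 0.0
    for d in deals:
        if d["stage"] == "closed_won" and first_touch.get(d["contact_id"]):
            total += d["amount"]
    return total

# Hypothetical data: only c1 has an LLM first touch and a closed-won deal.
sessions = [
    {"contact_id": "c1", "timestamp": "2025-01-05T10:00:00", "llm_source": "ChatGPT"},
    {"contact_id": "c1", "timestamp": "2025-01-20T09:00:00", "llm_source": None},
    {"contact_id": "c2", "timestamp": "2025-01-02T08:00:00", "llm_source": None},
    {"contact_id": "c2", "timestamp": "2025-02-01T08:00:00", "llm_source": "Perplexity"},
    {"contact_id": "c3", "timestamp": "2025-01-10T12:00:00", "llm_source": "Perplexity"},
]
deals = [
    {"contact_id": "c1", "stage": "closed_won", "amount": 50000.0},
    {"contact_id": "c2", "stage": "closed_won", "amount": 30000.0},
    {"contact_id": "c3", "stage": "open", "amount": 20000.0},
]
print(llm_attributed_contract_value(sessions, deals))
```

First touch is the strictest reading. A team that wants to count any LLM visit in the journey would swap the `setdefault` step for a check across all of a contact's sessions, and should report which model it used alongside the number.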

The Competitive Window

Right now, GEO is where SEO was in 2010. Most teams know it matters but are not doing anything about it. A small number of early movers are building content architectures specifically for LLM visibility and seeing outsized returns because the competition is thin.

This window will close. As more teams recognize that AI search is a real pipeline source, the competition for LLM citations will intensify. The teams that build their content architecture now, while the space is relatively uncontested, will have a compounding advantage. Their content will have more authority signals, more citation history, and more entity depth than competitors who start later.

The $506K in four months is notable not just because it is a large number, but because it was generated in a low-competition environment. The same content strategy deployed into a saturated GEO market would produce smaller results. The early mover advantage here is real and measurable.

One team is already building free tools on their website that attract 100,000+ visitors per quarter, with 30% coming from SEO. Imagine those same tools optimized for LLM citation. The visitor number matters less than the answer presence. If 100,000 visitors come to your site but your content is also being cited in 500,000 LLM responses per quarter, the brand impression multiplier is enormous.

What Most Teams Are Getting Wrong

The most common mistake I see is treating GEO as an add-on to existing SEO strategy. Teams take their current blog posts, add a few FAQ sections, sprinkle in some schema markup, and call it done.

This does not work because the content was never designed for extraction. Blog posts written for traditional SEO are optimized for engagement and time on page. They use narrative structures, build suspense, and bury the answer below the fold to increase scroll depth. These are anti-patterns for GEO. LLMs do not scroll. They extract. If your answer is in paragraph seven, it is unlikely to be cited.

The second mistake is ignoring original data. Most B2B content is derivative. It restates industry statistics, references well-known frameworks, and adds a thin layer of opinion. LLMs have already ingested all of that content, so citing any single source for commonly available information adds nothing to the answer. The content that earns citations is content that provides something the LLM cannot get from any other source.

The third mistake is optimizing for a single LLM. ChatGPT, Perplexity, Claude, and Gemini all have different content indexing and citation behaviors. A strategy that works well for Perplexity might underperform on ChatGPT. Early GEO practitioners are testing across all four major models and tracking citation rates per model to understand where their content performs best.

What I Am Betting On

I am treating GEO as a standalone revenue channel, not an SEO add-on. The content architecture I described above, with topic authority pages, structured Q&A, original data, comparison pages, and entity definitions, is a separate investment from traditional SEO. It shares some infrastructure (same website, same CMS, some content overlap) but the strategy, measurement, and optimization loops are different.

My bet is that within 18 months, GEO attribution will be a standard line item in pipeline reports for B2B teams. The tools for measurement will mature. The playbooks will solidify. And the teams that invested early will have built content moats that are difficult to replicate because authority compounds over time.

The 7.6x LLM traffic growth one agency reported is not an anomaly. It is what happens when you build for a channel with low competition and high buyer intent. The buyers asking LLMs about your category are further along in their decision process than the ones typing broad keywords into Google. They want answers, not options. And if your content is the answer, you win the deal before your competitor even knows the buyer was looking.

The question is not whether AI search will become a significant pipeline channel. The data already says it will. The question is whether you will be positioned for it when the inflection point hits, or scrambling to catch up while your competitors collect the citations.

I would rather be early than right on time.

Written by Elom

GTM and Growth engineer with 12 years across Fortune 500s, fintech, and B2B startups. Building at the intersection of AI, data, and revenue.
